How Inflow Improves Conversions Through A/B Testing with Flagsmith and Mixpanel

Inflow is a mobile app that helps people manage their ADHD. They provide support through psychoeducation, habit development and community.

Flagsmith is a feature flag, remote config and A/B testing engine. Inflow have been using Flagsmith as part of their core feature flagging workflow for staged feature releases, simple role-based access control (RBAC) and A/B tests within the mobile app.

Inflow wanted to start running A/B and multivariate user tests to better understand how people use their product and to optimise the experience for them. They chose to achieve this with a combination of their existing tools, Flagsmith and Mixpanel.

Technical setup

The Inflow platform is written in React Native on the front end, with Node.js powering their API. Inflow already had Mixpanel integrated into their stack, and were using it to track user behaviour in order to understand and optimise their product.

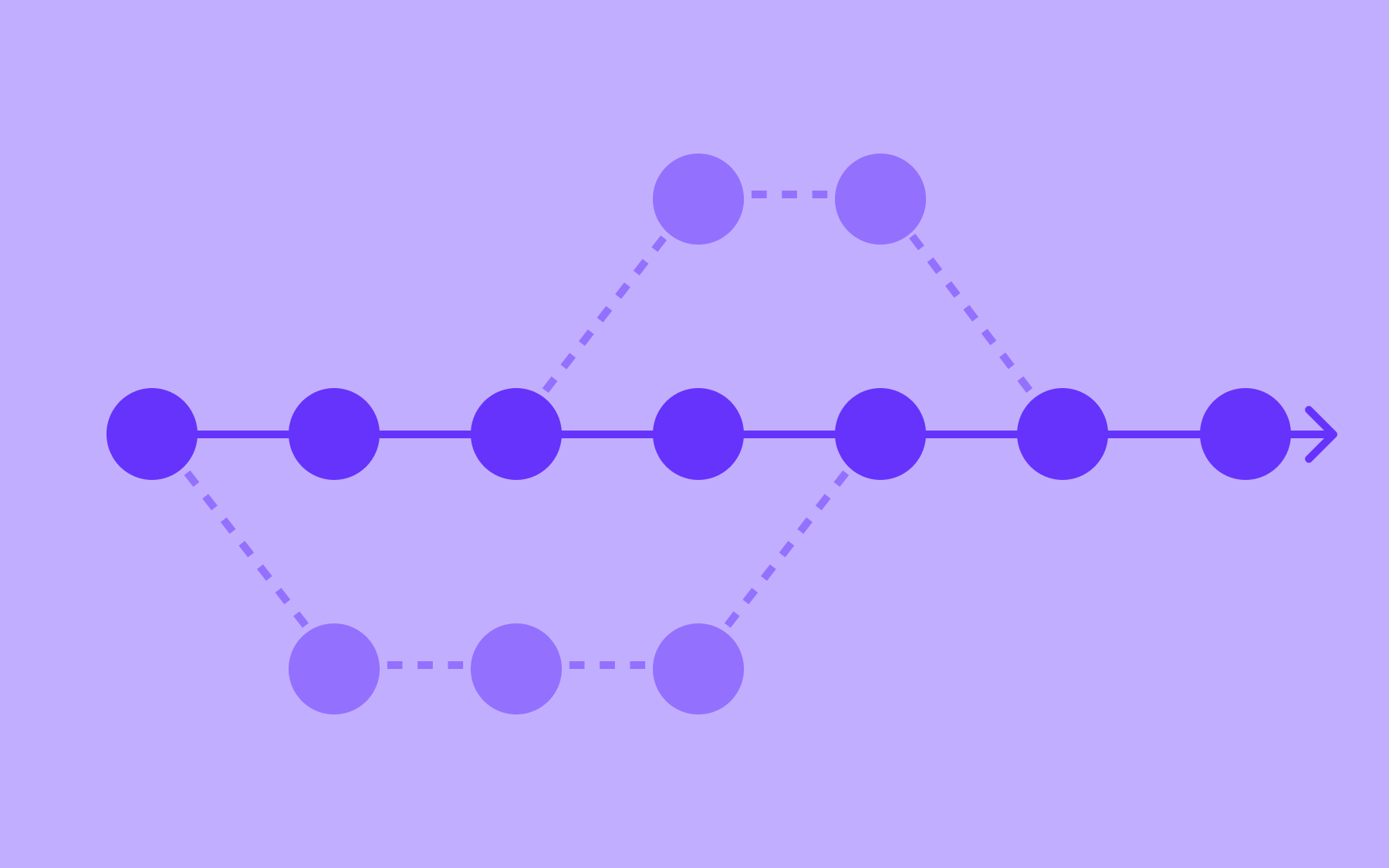

Inflow implemented a 50/50 A/B split test, using the Flagsmith platform to perform the user bucketing. On first app start, the Inflow app generates an anonymous ID and uses it as the Flagsmith Identity. Once the user registers, this ID becomes associated with the user account, allowing Inflow to split-test consistently across the registration barrier.
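To illustrate why a stable anonymous ID matters, here is a minimal sketch of the idea. It is not Inflow's code and not Flagsmith's bucketing algorithm: the `KeyValueStore` interface, the `anonymous_id` key, and the hash-based `bucketFor` function are all assumptions made for illustration. The point is only that an ID which is created once and then reused maps to the same variant on every app start, before and after registration.

```typescript
// Hypothetical persistent storage; in a real app this would be backed by
// whatever on-device key-value store the app already uses.
interface KeyValueStore {
  get(key: string): string | undefined;
  set(key: string, value: string): void;
}

const ANON_ID_KEY = "anonymous_id"; // hypothetical storage key

// Return the stored anonymous ID, creating and saving one on first app start.
function getOrCreateAnonymousId(store: KeyValueStore, generate: () => string): string {
  const existing = store.get(ANON_ID_KEY);
  if (existing !== undefined) {
    return existing;
  }
  const id = generate();
  store.set(ANON_ID_KEY, id);
  return id;
}

// 32-bit FNV-1a hash over UTF-16 code units, written with plain arithmetic.
function fnv1a32(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash = (hash ^ input.charCodeAt(i)) >>> 0;
    // Multiply by the FNV prime 16777619 modulo 2^32, in two 16-bit halves
    // so intermediate values stay within exact integer range.
    const low = (hash & 0xffff) * 16777619;
    const high = (((hash >>> 16) * 16777619) & 0xffff) * 65536;
    hash = (low + high) >>> 0;
  }
  return hash;
}

// Illustrative 50/50 split: the same identity and experiment name always
// produce the same bucket.
function bucketFor(identity: string, experiment: string): "true" | "false" {
  const fraction = fnv1a32(experiment + ":" + identity) / 4294967296;
  return fraction < 0.5 ? "true" : "false";
}
```

Because the bucket depends only on the identity and the experiment name, a user keeps their variant as long as the anonymous ID is carried over to the registered account.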

Segments are set up in Flagsmith to automatically perform the bucketing. The flag states are cached locally within the app, and all flag states are sent up to Mixpanel with each event, so it is known under what conditions that event occurred. Flagsmith already provides integrations with Mixpanel (and many other platforms). The integration streams these flag values into Mixpanel automatically behind the scenes. Mixpanel funnels are then split on the flag value contained within the event, to assess the impact of the split test.
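Conceptually, sending flag states with each event means merging them into the event's properties. In Inflow's setup the Flagsmith integration does this automatically; the sketch below only shows the shape of the resulting data. The `flag_` prefix, the event name, and the flag key are hypothetical, and the function is not part of either the Flagsmith or the Mixpanel SDK.

```typescript
type FlagValue = boolean | string | number;

interface AnalyticsEvent {
  name: string;
  properties: { [key: string]: unknown };
}

// Build an event whose properties include the current flag states, so the
// analytics tool can later split funnels on a flag's value.
function withFlagStates(
  name: string,
  properties: { [key: string]: unknown },
  flags: { [key: string]: FlagValue }
): AnalyticsEvent {
  const merged: { [key: string]: unknown } = {};
  for (const key of Object.keys(properties)) {
    merged[key] = properties[key];
  }
  for (const key of Object.keys(flags)) {
    // Hypothetical prefix to keep flag keys apart from other properties.
    merged["flag_" + key] = flags[key];
  }
  return { name: name, properties: merged };
}

// Example: an event recorded while a hypothetical animation flag is on.
const exampleEvent = withFlagStates(
  "Get Started Tapped",
  { screen: "welcome" },
  { welcome_animation: true }
);
console.log(JSON.stringify(exampleEvent));
```

With the flag value stored on every event, a funnel can be broken down by that property to compare the two variants.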

Simple RBAC is used to give Inflow’s content creators access to ‘Coming Soon’ modules. A flag is created for access that is off by default, with an override for a segment of content creators that Inflow manage from the Flagsmith dashboard. This allows Inflow to run QA on new app content in production before switching it on for all users in their CMS.
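The "off by default, on for one segment" rule can be sketched as a small resolution function. This is an illustrative model only, with hypothetical names (`FeatureFlag`, `segmentOverrides`, `content_creators`); it does not reflect how Flagsmith stores or evaluates flags internally.

```typescript
interface FeatureFlag {
  defaultEnabled: boolean;
  // Segment name -> enabled state for members of that segment.
  segmentOverrides: { [segment: string]: boolean };
}

// The first matching segment override wins; otherwise use the default.
function isFlagEnabled(flag: FeatureFlag, userSegments: string[]): boolean {
  for (let i = 0; i < userSegments.length; i++) {
    const segment = userSegments[i];
    if (Object.prototype.hasOwnProperty.call(flag.segmentOverrides, segment)) {
      return flag.segmentOverrides[segment];
    }
  }
  return flag.defaultEnabled;
}

// Hypothetical 'Coming Soon' access flag: off for everyone except creators.
const comingSoonAccess: FeatureFlag = {
  defaultEnabled: false,
  segmentOverrides: { content_creators: true },
};

console.log(isFlagEnabled(comingSoonAccess, ["content_creators"])); // true
console.log(isFlagEnabled(comingSoonAccess, [])); // false
```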

Gathering results

Seeing this in action and running the A/B tests is both exciting and rewarding. To give a taste of tests and results from Inflow’s work, here are a few real world examples. For each example the scientific method was used as the basis for setting up the test.

Now let’s jump right into the process and the experiments.

Experiment 1

Hypothesis: Members don’t have the validation that the app will be helpful before signing up

Experiment: Add a 3-5 second animation of member feedback we’ve received when members open the app for the first time.

Outcome measured by:

- Proportion of members who tap the ‘Get started’ button

Conclusion:

- True = with animation

- False = without animation (control)

- True: 468 opened app -> 402 tapped Get Started (86%)

- False: 468 opened app -> 387 tapped Get Started (83%)

- True wins with 92% probability
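The article does not say how the 92% figure was calculated. As a rough cross-check, the sketch below computes the two conversion rates and estimates the probability that the variant beats the control using a normal approximation to the difference of two proportions. This is one common method and an assumption on our part, not necessarily the one Inflow or Mixpanel used; the function names are hypothetical.

```typescript
// Abramowitz and Stegun approximation of the error function (formula 7.1.26).
function erf(x: number): number {
  const sign = x < 0 ? -1 : 1;
  const ax = Math.abs(x);
  const t = 1 / (1 + 0.3275911 * ax);
  const poly =
    ((((1.061405429 * t - 1.453152027) * t + 1.421413741) * t - 0.284496736) * t +
      0.254829592) * t;
  return sign * (1 - poly * Math.exp(-ax * ax));
}

// Standard normal cumulative distribution function.
function normalCdf(z: number): number {
  return 0.5 * (1 + erf(z / Math.SQRT2));
}

interface Arm {
  visitors: number;
  conversions: number;
}

function conversionRate(arm: Arm): number {
  return arm.conversions / arm.visitors;
}

// Approximate P(variant rate > control rate), treating each observed rate
// as normally distributed with its binomial standard error.
function probabilityVariantBeatsControl(variant: Arm, control: Arm): number {
  const pv = conversionRate(variant);
  const pc = conversionRate(control);
  const variance =
    (pv * (1 - pv)) / variant.visitors + (pc * (1 - pc)) / control.visitors;
  return normalCdf((pv - pc) / Math.sqrt(variance));
}

// Experiment 1 counts from the list above.
const withAnimation: Arm = { visitors: 468, conversions: 402 };
const withoutAnimation: Arm = { visitors: 468, conversions: 387 };

console.log(Math.round(conversionRate(withAnimation) * 100)); // 86
console.log(Math.round(conversionRate(withoutAnimation) * 100)); // 83
console.log(probabilityVariantBeatsControl(withAnimation, withoutAnimation));
```

For these counts the approximation gives roughly 0.91, in the same region as the reported 92%; a different method, such as a Bayesian comparison, would be expected to give a slightly different number.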

Experiment 2

Hypothesis: people don’t know what to do on the first page

Experiment: Include text below the image vs no text

Outcome measured by:

- Proportion of people who tap the ‘Get started’ button after the first app open

Conclusion:

- True = include text (Version 1)

- False = no text (Version 2 / control)

- True: 847 first app opens -> 574 Get Started press (68%)

- False: 884 first app opens -> 598 Get Started press (64%)

- ‘True’ version converted 6% better than the ‘False’ version. ‘True’ version is the winner with 94% certainty.

Where to go from here?

Once Inflow had seen their first few experiments show clear and actionable results, they began the process over again: new observations and hypotheses, new experiments. A couple of other user flows and areas currently being considered for A/B testing are:

- Adding in lifetime pricing

- Adding in a video during onboarding to provide more information about our product offering

The takeaway

Inflow was able to easily incorporate A/B testing into their development process using Flagsmith’s segments and its native integration with their analytics platform, Mixpanel. The ability to A/B test allowed them to test both small changes and larger features, and to get real, measurable feedback. This allows them to continually improve the app and make a positive impact on more people managing their ADHD.
