Introducing Multivariate Feature Flags to enable seamless A/B Testing and Canary Deployments

We’re really excited to release our latest major platform upgrade: multivariate flags. We’ve spent a lot of time and effort designing and building out this feature, and we hope you like it!

So what are multivariate flags? In their simplest form, they are flags where the value is selected from a predefined list. That’s it. So what’s the fuss all about? Well, there are three core use cases for multivariate flags:

- Driving percentage split A/B/n tests.

- Staged rollouts.

- Overriding a flag value by selecting from a defined list. This can reduce errors when working across environments, and saves you from managing these string values in your code.

OK, let’s look at creating a multivariate flag. You create them just like a regular flag. You can provide the first variate value, then just click the “Add Variation” button to include additional variations.

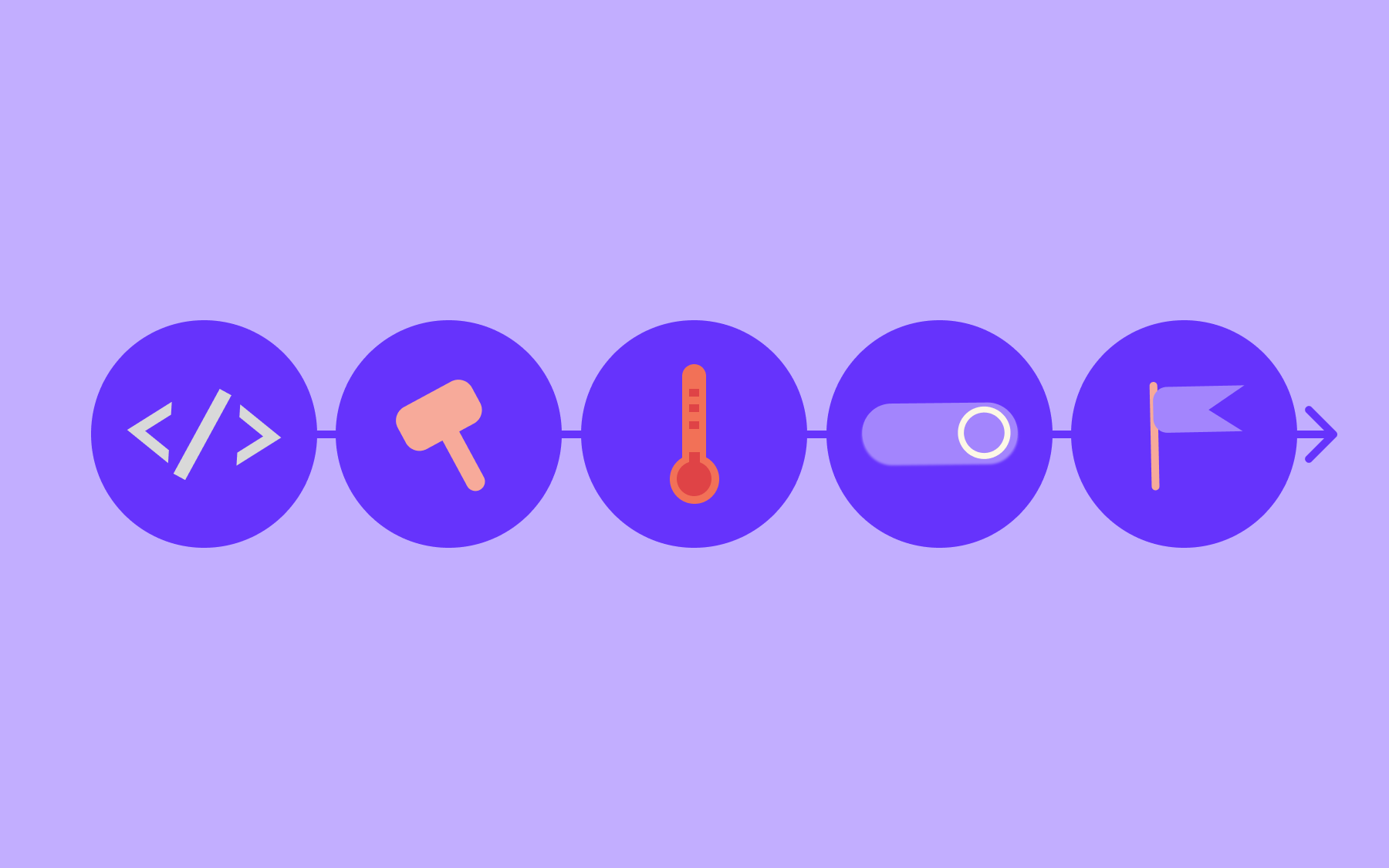

There are two ways to return variations of a multivariate flag, as opposed to the control:

- Using Identity and Segment overrides

- As part of an A/B Test

Specifying a Variation with Identity and Segment Overrides

You can leave the Environment Weights for all the Variants as 0%. In this situation, all Flag evaluations for “header_size” will return the control value.

At the same time, you can override the variate value against both Identities and Segments.

Overriding a value against an Identity:

You can use these Variates to encapsulate lists of values and then use Segments to power these lists.

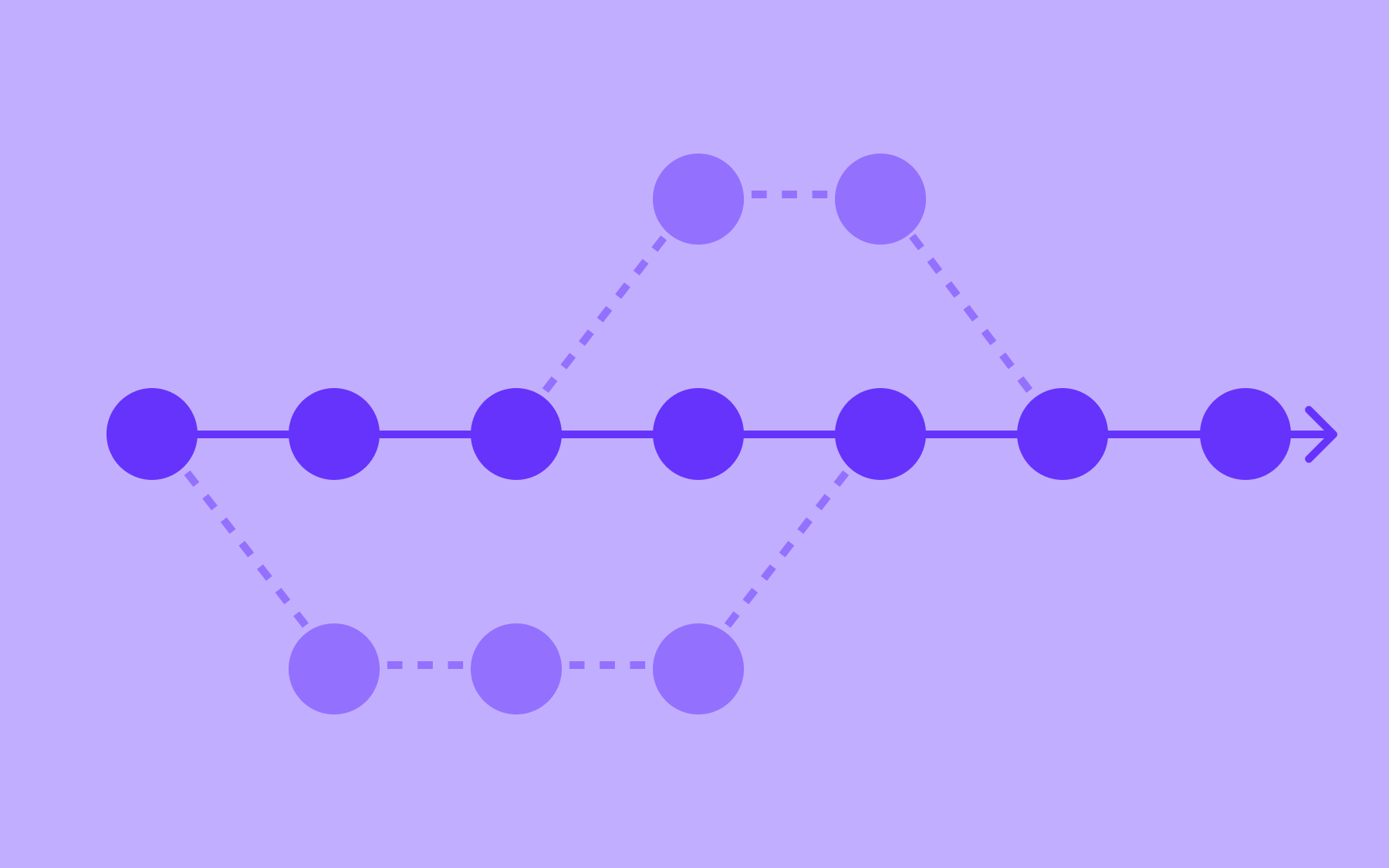

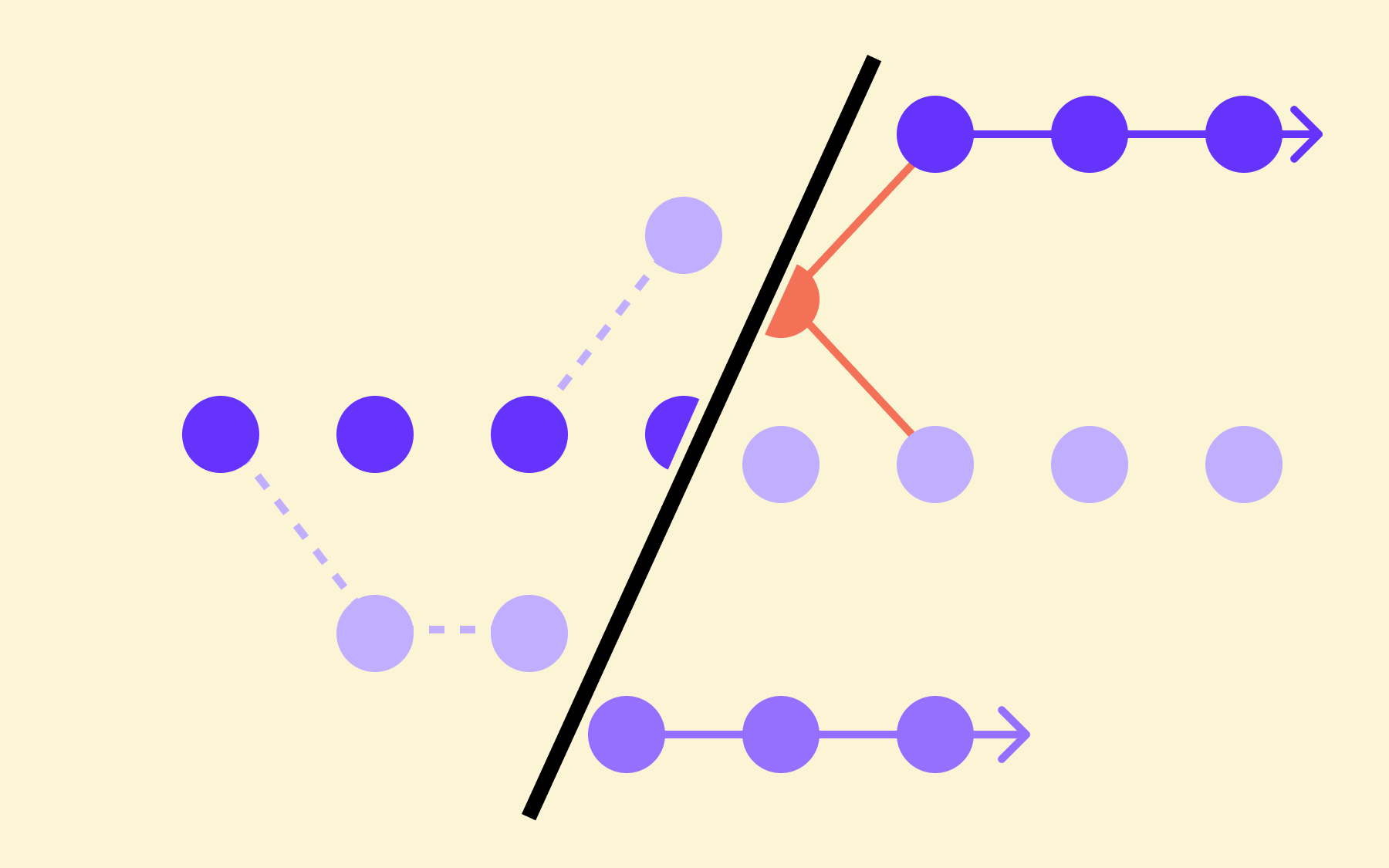

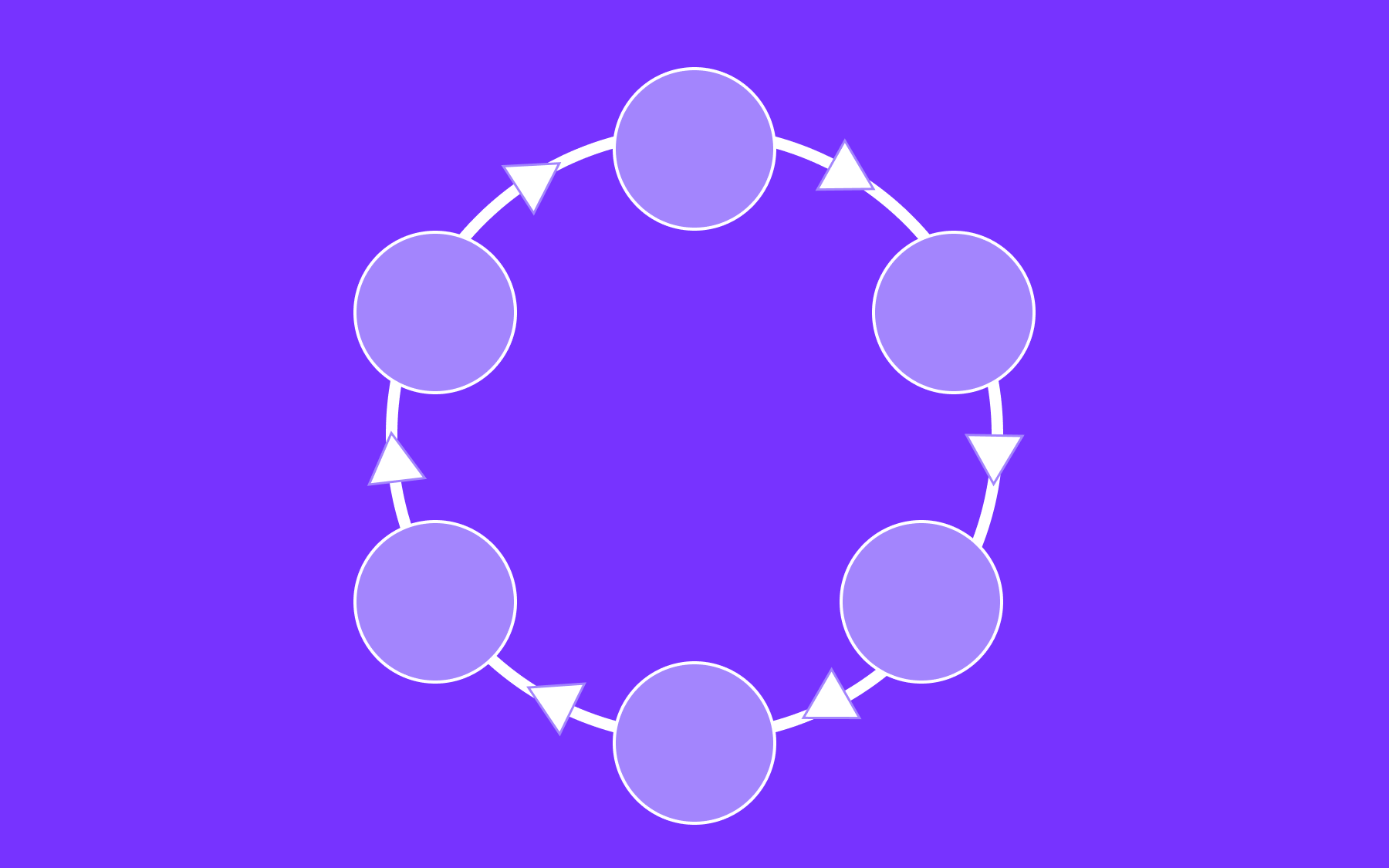

Using Environment Weights to run A/B/n Tests

As it stands, within this Environment, calling getValue("header_size") without an Identity will return “normal”, regardless of what the Environment Weights have been set to. The Environment Weights only come into effect when you get the Flags for an Identity.

These weights work by splitting your users up into randomised groups. In the example above, roughly 50% of our user population will receive the control value “normal”. Roughly 25% will receive “large” and the remaining 25% or so will receive “humongous”.

You can manage different Environment Weights across each Environment within your Project.

Powering complex A/B/n tests with control groups

Where multivariate flags really come into their own is in powering both A/B/n tests and multiple A/B tests on a single page. As mentioned above, you can easily provide weightings for variants when you are creating or editing your flags.

Let’s imagine we are running an ecommerce store, and want to test some different copy for the checkout button. So we create a flag like this:

We don’t want to run this test against all our customers; we only want to test 15% of the population. Hence the control value carries 85% of the weighting. This flag will return “Check Out” for 85% of our users, “Let’s buy this thing!” for 5%, “CHECK OUT NOW” for another 5%, and “Proceed to Check Out!” for the remaining 5%.
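The arithmetic is simple: the control share is whatever is left once the variant weights are subtracted from 100%. As a small sanity check, it could be sketched like this. The `control_weight` helper and the dictionary layout are hypothetical and not part of any Flagsmith SDK.

```python
def control_weight(variant_weights: dict[str, int]) -> int:
    """Return the percentage left for the control after the variants."""
    if any(weight < 0 for weight in variant_weights.values()):
        raise ValueError("weights must not be negative")
    total = sum(variant_weights.values())
    if total > 100:
        raise ValueError(f"variant weights add up to {total}%, which exceeds 100%")
    return 100 - total


# The three test variants from the checkout button example, 5% each.
checkout_variants = {
    "Let's buy this thing!": 5,
    "CHECK OUT NOW": 5,
    "Proceed to Check Out!": 5,
}

print(control_weight(checkout_variants))  # percentage that still sees "Check Out"
```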

We can then get to coding up our new Checkout button. We can grab our flag using a Flagsmith SDK, and just get this flag value to use as the button text. We will also set up our analytics platform to integrate with Flagsmith. Doing this sends all our flag values on to the downstream analytics platform.

As a result, whenever a customer comes to check out a purchase, 85% will see no change, but 3 groups of 5% will see the new button copy. Crucially, our analytics platform will also have the relevant value shown to the user, as a user property for this customer.

Once we have this data, we can run a confidence test on the customers that saw the different button copy, whether they clicked on it, and how their behaviour changed.
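One common way to run such a test is a two-proportion z-test comparing the click-through rate of the control group with that of a single variant. The sketch below is one possible approach, not something Flagsmith or your analytics platform necessarily does for you. The function name is hypothetical and the numbers in the usage example are made up purely for illustration.

```python
import math


def two_proportion_z_test(clicks_a: int, n_a: int, clicks_b: int, n_b: int):
    """Compare two conversion rates; return (z, two-sided p-value)."""
    rate_a = clicks_a / n_a
    rate_b = clicks_b / n_b
    # Pooled rate under the null hypothesis that both groups behave alike.
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative, made-up counts: control group vs. one 5% variant group.
z, p = two_proportion_z_test(clicks_a=850, n_a=8500, clicks_b=70, n_b=500)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference in click rate is unlikely to be down to chance alone. Since this example has three variants, you would repeat the comparison for each one and should be wary of reading too much into a single borderline result.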

Try it out!

At this point you are probably excited to start using this amazing feature, and since we think this is pretty useful, we included multivariate flags in all of our plans, even for our open source and free users. Please let us know what you think and share some of the amazing use cases with us.

-Ben
